Chunking Beats Slicing: What SWE-Bench Taught Me About Code Intelligence

Mar 17, 2026

I benchmarked two approaches to code indexing. The smarter one lost.

If you recall from my last post, I've been using SWE-Bench Verified to guide Kodit development, which ultimately powers Helix Code Intelligence.

If you'd like to try Helix Code Intelligence, sign up for an account at Helix.ML.

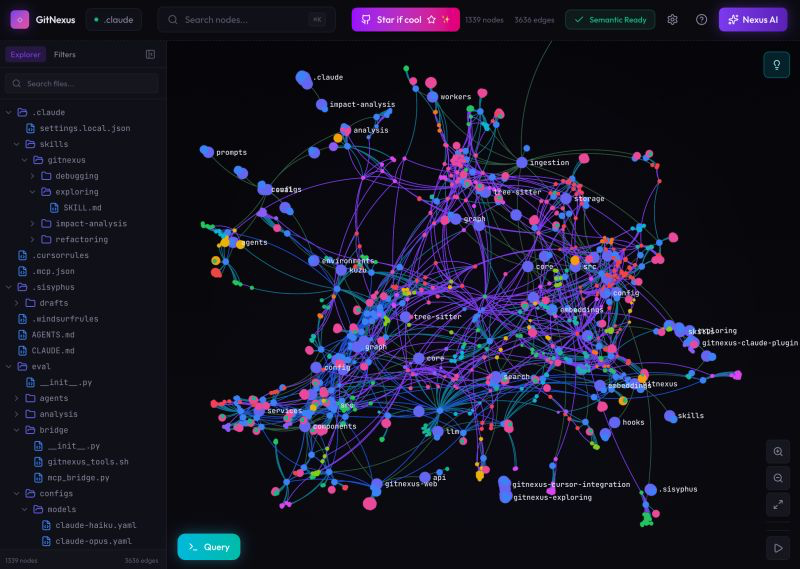

A co-worker shared a hyped-up post about a new project called GitNexus. In summary, it generates a concrete syntax tree of code paths through an application and exposes that as an MCP server. Sound familiar? It's exactly what Kodit used to do. And as is all too common these days, it racked up an unreasonable number of GitHub stars in a suspiciously short amount of time.

> 🚨 Breaking: Someone just open sourced a knowledge graph engine for your codebase and it's terrifying how good it is.
>
> It's called GitNexus. And it's not a documentation tool.
>
> It's a full code intelligence layer that maps every dependency, call chain, and execution flow in your repo -- then plugs directly into Claude Code, Cursor, and Windsurf via MCP.
>
> Your AI agent has been coding blind. This fixes that.
>
> 9.4K GitHub stars. 1.2K forks. Already trending.

I've seen this pattern repeatedly over the past few weeks. Some YC founder pivots, dumps a new project in record time, it gets profiled in a few of the larger tech blogs, goes viral on X, and magically accumulates several thousand stars. Then you never hear about it again. This frustrates me because it trivialises the work people put into normal, boring, genuinely important software, the Apache-style libraries that actually hold things together over the long run. But that's a rant for another day.

The key phrase above was "used to do". Let me explain what changed, and why.

RAG: Chunking vs Slicing

Kodit previously extracted slices of code using a syntax tree to generate self-contained code snippets. My theory was that these slices would be more useful to a coding assistant than a basic RAG chunk, because they'd capture real code structure rather than arbitrarily cutting text mid-function. It took me a long time to actually test this, because, as I wrote last time, benchmarking is hard.

A quick definition of terms for anyone not deep in compiler theory. Program slicing means isolating small but complete pieces of usable code, including their dependencies and relevant context. They're not standalone programs, but they're coherent extracts you could copy and paste and actually understand. Chunking, on the other hand, just splits text into smaller pieces without any awareness of code structure. A chunk might cut straight through a critical function definition without a care in the world.
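To make the distinction concrete, here is a minimal sketch in Python using the standard `ast` module. It is not how Kodit does either job: `chunk_text` and `slice_for` are hypothetical helpers, and `slice_for` is a heavily simplified stand-in for real program slicing that only follows calls to top-level functions in the same file.

```python
import ast

SOURCE = '''\
import math

def area(radius):
    return math.pi * radius ** 2

def describe(radius):
    return f"area={area(radius):.2f}"
'''


def chunk_text(text: str, size: int) -> list[str]:
    """Naive chunking: fixed-size character windows, blind to syntax."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def slice_for(text: str, name: str) -> str:
    """Simplified slice: the named function, the top-level functions it
    calls (transitively), and the module's imports. Illustration only."""
    tree = ast.parse(text)
    funcs = {n.name: n for n in tree.body if isinstance(n, ast.FunctionDef)}
    imports = [n for n in tree.body if isinstance(n, (ast.Import, ast.ImportFrom))]

    needed: set[str] = set()
    stack = [name]
    while stack:
        current = stack.pop()
        if current in needed or current not in funcs:
            continue
        needed.add(current)
        # Follow plain-name calls such as area(...) inside this function.
        for node in ast.walk(funcs[current]):
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                stack.append(node.func.id)

    parts = [ast.get_source_segment(text, n) for n in imports]
    # Emit functions in their original source order.
    parts += [ast.get_source_segment(text, funcs[n]) for n in funcs if n in needed]
    return "\n\n".join(parts)


chunks = chunk_text(SOURCE, 60)
describe_slice = slice_for(SOURCE, "describe")
print(chunks[0])
print("-----")
print(describe_slice)
```

With a 60-character window, the first chunk stops partway through the body of `area`, while the slice for `describe` comes back as a complete unit that also carries `area` and the `import math` line.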

So slicing sounds better, right? Here's the crux: it isn't.

Benchmarking Slicing vs. Chunking

To compare them properly, I built a benchmark script that downloaded SWE-Bench Verified and ran mini-SWE-agent, the officially sanctioned agent for SWE-Bench tasks, as the coding assistant.

The setup had three conditions. First, a clean baseline: mini-SWE-agent running with the default SWE-Bench prompt and no Kodit involvement at all. This gives us a reference point that reflects what you'd get from a capable agent with no additional code intelligence layered on top.

The two Kodit conditions shared the same basic structure. For each SWE-Bench task, a script indexed the target repository at the exact git commit specified in that task. This matters because SWE-Bench tests are tied to specific historical snapshots of a codebase, so the index needs to match precisely. The agent was then given MCP-style search capabilities against that index, letting it query for relevant code before attempting a fix. I say MCP-style rather than MCP proper because mini-SWE-agent doesn't ship with native MCP support, so this required a small shim to wire things together.
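As a rough illustration of that shape, here is a toy sketch in Python. Everything in it is hypothetical: `ChunkIndex`, `indexes`, and `search_tool` are names invented for this example, the keyword scoring is deliberately crude, and none of it reflects Kodit's real index or the actual shim. The point is only that indexes are keyed by repository and commit, and that the agent reaches them through an ordinary function call.

```python
from dataclasses import dataclass, field


@dataclass
class ChunkIndex:
    """Toy in-memory index of (file path, chunk text) pairs."""
    chunks: list[tuple[str, str]] = field(default_factory=list)

    def add_file(self, path: str, text: str, lines_per_chunk: int = 20) -> None:
        lines = text.splitlines()
        for start in range(0, len(lines), lines_per_chunk):
            chunk = "\n".join(lines[start:start + lines_per_chunk])
            self.chunks.append((path, chunk))

    def search(self, query: str, limit: int = 3) -> list[tuple[str, str]]:
        # Score each chunk by how often the query terms appear in it.
        terms = query.lower().split()
        scored = [
            (sum(text.lower().count(term) for term in terms), path, text)
            for path, text in self.chunks
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(path, text) for score, path, text in scored[:limit] if score > 0]


# One index per (repository, commit), so a task never sees the wrong snapshot.
indexes: dict[tuple[str, str], ChunkIndex] = {}


def search_tool(repo: str, commit: str, query: str) -> list[tuple[str, str]]:
    """Hypothetical shim: a plain function the agent calls instead of MCP."""
    index = indexes.get((repo, commit))
    if index is None:
        raise KeyError(f"no index built for {repo}@{commit}")
    return index.search(query)


# Example usage with made-up file contents and a made-up commit id.
index = ChunkIndex()
index.add_file("units/core.py", "def to_unit(value):\n    return value\n")
index.add_file("io/fits.py", "def read_header(path):\n    return {}\n")
indexes[("example/repo", "abc123")] = index

hits = search_tool("example/repo", "abc123", "read header")
print([path for path, _ in hits])
```

Asking for a commit that was never indexed raises an error rather than silently falling back to another snapshot, which is the property that matters for SWE-Bench.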

The difference between the two Kodit conditions was entirely in how the index was built. The pre-1.0 version used program slicing to extract structured code paths from the repository. The post-1.0 version replaced that with straightforward text chunking. Same agent, same prompts, same search interface, different indexing strategy. That isolation is important: it means any performance difference we see should be attributable to the indexing approach rather than anything else.

One thing worth noting is that the agent decides for itself whether to use the search tool at all on any given task. It isn't forced to query Kodit before writing code. This is intentional and realistic: a good coding assistant should be able to judge when additional context is worth fetching and when it can proceed from what it already knows. It does mean, though, that some tasks in the Kodit conditions may have been solved without ever touching the index.

Results:

| Metric | Baseline | Kodit Pre-1.0 (slicing) | Kodit Post-1.0 (chunking) |
|---|---|---|---|
| Instances evaluated | 25 | 25 | 25 |
| Resolved (passed) | 12 | 11 | 15 |
| Resolve rate | 48% | 44% | 60% |

The headline: post-1.0 chunking wins, and it's not particularly close.

A few caveats before anyone gets too excited. Twenty-five instances is too small a sample to call any of these differences statistically significant. The tasks in this first batch are almost all from the astropy project, so diversity is limited. And as I noted last time, SWE-Bench isn't really the ideal Kodit benchmark anyway, since Kodit is designed to shine when surfacing results across separate organisational repositories rather than a single open-source project. So treat 60% as an early signal, not as an estimate of real-world performance.
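To put a rough number on the sample-size caveat, here is a small Python sketch that computes a 95% Wilson score interval for each condition from the resolved counts in the table. The choice of the Wilson interval is mine for illustration, and `wilson_interval` is a helper written for this example, not part of the benchmark script.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    p = successes / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return centre - half, centre + half


# Resolved counts out of 25 instances, taken from the results table.
resolved = {"baseline": 12, "slicing": 11, "chunking": 15}
intervals = {name: wilson_interval(count, 25) for name, count in resolved.items()}

for name, (low, high) in intervals.items():
    rate = resolved[name] / 25
    print(f"{name}: {rate:.0%} (95% interval {low:.0%} to {high:.0%})")
```

At this sample size each interval spans well over thirty percentage points and all three overlap, which is why the ordering of the conditions could plausibly change on a larger run.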

What's genuinely interesting, though, is that the slicing version came in below the baseline, albeit by a single task. It wasn't just not helpful. If anything, it was getting in the way.

Coding Assistants Read and Write Files. That's It.

Spending time with coding assistants has made one thing very clear to me: they are trained to read and write files. The underlying LLMs were trained on code. The RLHF layer pushed them toward tool use, specifically reading files for context and writing files to produce solutions. That's the loop they're optimised for.

Program slices aren't files. They're synthetic constructs that don't map onto how LLMs actually process information. In my experience watching Helix in action, LLMs are genuinely poor at working with syntax trees. They don't construct a tree representation of a codebase any more than you construct a parse tree of a novel when you read it. You read it, you understand it, you write something new. LLMs do the same thing. Handing them a syntax tree is like handing someone a book's index and expecting them to write a book report from it.

So MCP-based syntax trees? Also not particularly helpful, for the same reason.

The best performance comes from making it easy to surface and access the actual files that are relevant to the task. That's the guiding principle behind both Kodit and Helix Code Intelligence, and the benchmark results are starting to back it up.

What's Next?

Twenty-five tasks is enough to see a signal, but not enough to trust it. SWE-Bench Verified contains 500 instances across a much wider range of projects and problem types, and running Kodit across the full set is the obvious next step.

Time is the constraint. Each run takes meaningful compute and wall-clock time, and right now there are other things competing for both. But getting a statistically robust result across the full benchmark is on the list, and when I get the chance to run it properly I'll write it up here. If the 60% resolve rate holds up at scale across a diverse set of repositories, that will be a genuinely interesting result. And if it doesn't, that'll be interesting too.

Phil Winder builds Kodit, the code intelligence layer that powers Helix Code Intelligence. If you want to try it, sign up at Helix.ML.